Zero-knowledge proofs (ZKPs) represent one of the most fascinating and powerful concepts in modern cryptography. Building upon your existing knowledge of hash functions and Merkle trees, this report delves into the intricate world of ZKPs, exploring how they enable one party to prove knowledge of a specific piece of information without revealing what that information actually is. This cryptographic breakthrough allows for verification without disclosure, creating new possibilities for privacy-preserving systems in our increasingly digital world.

Zero-knowledge proofs, first conceived in 1985 by Shafi Goldwasser, Silvio Micali, and Charles Rackoff, provide a method for one party (the prover) to convince another party (the verifier) that a statement is true without revealing any additional information beyond the validity of the statement itself. This seemingly paradoxical capability addresses a fundamental question: how can you prove you know something without showing what that something is?

The core innovation of ZKPs lies in their ability to separate the verification of knowledge from the disclosure of that knowledge. Traditional authentication methods typically require revealing sensitive information—like a password—to verify identity. ZKPs, however, enable verification without requiring this disclosure, fundamentally transforming our approach to authentication, identity verification, and privacy-preserving computations. This separation becomes especially powerful when combined with your existing understanding of cryptographic primitives like hash functions and data structures like Merkle trees.

In their original paper, Goldwasser, Micali, and Rackoff described this revelation as "surprising" because it showed that "adding interaction to the proving process may decrease the amount of knowledge that must be communicated in order to prove a theorem". This insight opened up entirely new avenues in cryptographic research and application development that continue to expand today.

For a protocol to qualify as a zero-knowledge proof, it must satisfy three critical properties that ensure its security, reliability, and privacy guarantees:

Completeness ensures that if the statement being proven is true and both parties follow the protocol honestly, the verifier will be convinced of the truth. This property guarantees that valid proofs are always accepted by an honest verifier, ensuring the system's functional reliability. Without completeness, a legitimate prover with valid knowledge might fail to convince the verifier, rendering the system unusable.

Soundness mandates that no dishonest prover can convince an honest verifier that a false statement is true, except with negligible probability. This property protects against fraud and ensures that the verification process maintains its integrity. The soundness property usually allows for a small probability of error, known as the "soundness error," making ZKPs probabilistic rather than deterministic proofs. However, this error can be made negligibly small through protocol design.
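
As a worked illustration of how the soundness error shrinks, assume a protocol whose single round has soundness error $$\varepsilon$$ and that is repeated $$k$$ times with fresh, independent randomness (the symbols $$\varepsilon$$ and $$k$$ are introduced here only for this example). A cheating prover must then survive every round:

$$\Pr[\text{cheating prover passes all } k \text{ rounds}] \le \varepsilon^{k}, \qquad \text{e.g. } \varepsilon = \tfrac{1}{2},\ k = 40 \;\Rightarrow\; 2^{-40} \approx 9.1 \times 10^{-13}$$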

The zero-knowledge property, the most distinctive aspect of ZKPs, ensures that the verifier learns nothing beyond the validity of the statement being proved. This means that the verification process reveals no additional information about the prover's secret knowledge. Mathematically, this is formalized by demonstrating that every verifier has some simulator that, given only the statement to be proved (without access to the prover), can produce a transcript indistinguishable from an actual interaction between the prover and verifier.

Together, these three properties create a framework that enables secure verification without compromising sensitive information, forming the foundation upon which all zero-knowledge protocols are built.

To grasp the concept of zero-knowledge proofs more intuitively, several analogies have become standard in explaining how one can prove knowledge without revealing it.

One of the most accessible ways to understand zero-knowledge proofs is through the "Where's Waldo" analogy. Imagine you've found Waldo in a busy illustration and want to prove this to someone without revealing his exact location. You take a large piece of cardboard with a small Waldo-sized hole cut in it, place it over the image so that only Waldo is visible through the hole, and show it to the verifier. The verifier now knows you've found Waldo without learning where in the image he's located.

This example demonstrates the zero-knowledge property elegantly: you've proven your knowledge (finding Waldo) without revealing the information itself (Waldo's location). The completeness property is satisfied because an honest prover who has found Waldo can always demonstrate this fact. The soundness property is maintained because if you haven't actually found Waldo, you cannot successfully position the cardboard to show him through the hole.

This analogy isn't perfect, since it does reveal some information about Waldo's appearance, but it effectively illustrates the core concept of proving knowledge without full disclosure.

Moving to a more technical example, consider how zero-knowledge proofs can verify blockchain address ownership. Alice wants to prove to Bob that she owns a particular blockchain address without revealing her private key. Bob can encrypt a message with Alice's public key, which only Alice can decrypt using her private key. Alice then returns the decrypted message to Bob.

If Alice successfully decrypts the message, Bob can be confident that she owns the private key associated with the public address. The completeness property is satisfied because Alice, knowing her private key, can always decrypt messages encrypted with her corresponding public key. The soundness property holds because without the private key, an impostor cannot decrypt the message. The zero-knowledge goal is met informally, in the sense that Alice never reveals her private key during this exchange and only demonstrates her ability to use it. A protocol that is zero-knowledge in the formal sense must additionally ensure that Bob learns nothing from the decrypted responses themselves.

This process can be repeated with different messages to reduce the probability of lucky guesses to negligible levels, strengthening the soundness of the proof. This example demonstrates how zero-knowledge proofs leverage asymmetric cryptography in practical applications while maintaining privacy.
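
The exchange above can be sketched in a few lines of Python. This is only an illustration under loud assumptions: it uses textbook RSA with a hypothetical toy key (`P`, `Q`, `E`, `D` are made up for the example and far too small to be secure), no padding, and invented function names. It shows the message flow of the repeated challenge, not a production design.

```python
import secrets

# Toy textbook-RSA key (hypothetical values, far too small to be secure).
P, Q = 61, 53
N = P * Q                            # public modulus
E = 17                               # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))    # private exponent (Python 3.8+), known only to Alice


def bob_make_challenge():
    """Bob picks a random message and encrypts it under Alice's public key."""
    message = secrets.randbelow(N - 2) + 2    # random value in [2, N-1]
    ciphertext = pow(message, E, N)
    return message, ciphertext


def alice_respond(ciphertext, private_exponent):
    """Alice decrypts the challenge with the private exponent she holds."""
    return pow(ciphertext, private_exponent, N)


def run_rounds(private_exponent, rounds=20):
    """Return True only if every one of Bob's challenges is answered correctly."""
    for _ in range(rounds):
        message, ciphertext = bob_make_challenge()
        if alice_respond(ciphertext, private_exponent) != message:
            return False
    return True
```

Calling `run_rounds(D)` succeeds because the real private exponent always recovers Bob's message, while a party using a wrong exponent is caught almost immediately. Note that in this sketch Alice decrypts values chosen by Bob, which is one reason protocols of this shape are usually treated as proofs of key possession rather than formally zero-knowledge proofs.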

Zero-knowledge proofs are rigorously defined within computational complexity theory, using the language of interactive Turing machines to establish their properties and security guarantees.

A formal definition of zero-knowledge uses computational models, most commonly Turing machines. Let P, V, and S be Turing machines representing the prover, verifier, and simulator respectively. An interactive proof system (P, V) for a language L is zero-knowledge if for any probabilistic polynomial time (PPT) verifier $$\hat{V}$$ there exists a PPT simulator S such that:

$$\forall x \in L,\ z \in \{0,1\}^{*}:\quad \operatorname{View}_{\hat{V}}[P(x) \leftrightarrow \hat{V}(x,z)] = S(x,z)$$

where $$\operatorname{View}_{\hat{V}}[P(x) \leftrightarrow \hat{V}(x,z)]$$ represents a record of the interactions between P(x) and $$\hat{V}(x,z)$$. The auxiliary string z represents prior knowledge, including the random coins of $$\hat{V}$$. Equality here means that the two sides are identically distributed (perfect zero-knowledge); statistical and computational variants relax this to indistinguishability.

This definition formalizes the intuition that the verifier gains no additional knowledge from the interaction with the prover beyond what could be simulated without such interaction. In other words, anything the verifier could learn from the interaction, they could have computed themselves without the prover's involvement, meaning no actual knowledge is transferred during the verification process.
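
To make the role of the simulator more tangible, the sketch below uses the Schnorr identification protocol as an assumed example (the text above does not name a specific protocol). The prover knows a secret exponent x with public key y = G^x mod P and sends a commitment; the verifier replies with a random challenge; the prover answers with a response that the verifier checks against y. The group parameters `P`, `Q`, `G` and all function names are illustrative choices, and the simulation shown only covers a verifier that picks its challenge at random, which is weaker than the definition above.

```python
import secrets

# Toy group parameters (assumptions for illustration, far too small for real use):
# P = 2*Q + 1 with P and Q prime; G = 4 generates the subgroup of order Q.
P, Q, G = 2039, 1019, 4


def verify(public_key, commitment, challenge, response):
    """Schnorr check: G^response == commitment * public_key^challenge (mod P)."""
    return pow(G, response, P) == (commitment * pow(public_key, challenge, P)) % P


def real_transcript(secret):
    """Transcript produced by an honest prover who knows the secret exponent."""
    nonce = secrets.randbelow(Q)
    commitment = pow(G, nonce, P)
    challenge = secrets.randbelow(Q)             # honest verifier's random challenge
    response = (nonce + challenge * secret) % Q
    return commitment, challenge, response


def simulated_transcript(public_key):
    """Transcript produced WITHOUT the secret, by choosing the response first."""
    challenge = secrets.randbelow(Q)
    response = secrets.randbelow(Q)
    # Solve the verification equation for the commitment (negative exponent
    # with a modulus computes a modular inverse power in Python 3.8+).
    inverse = pow(public_key, -challenge, P)
    commitment = (pow(G, response, P) * inverse) % P
    return commitment, challenge, response
```

Both functions yield triples that pass `verify`, and in both the challenge and response are uniformly random with the commitment determined by them. A transcript therefore carries nothing the verifier could not have generated alone, which is the intuition the definition captures.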

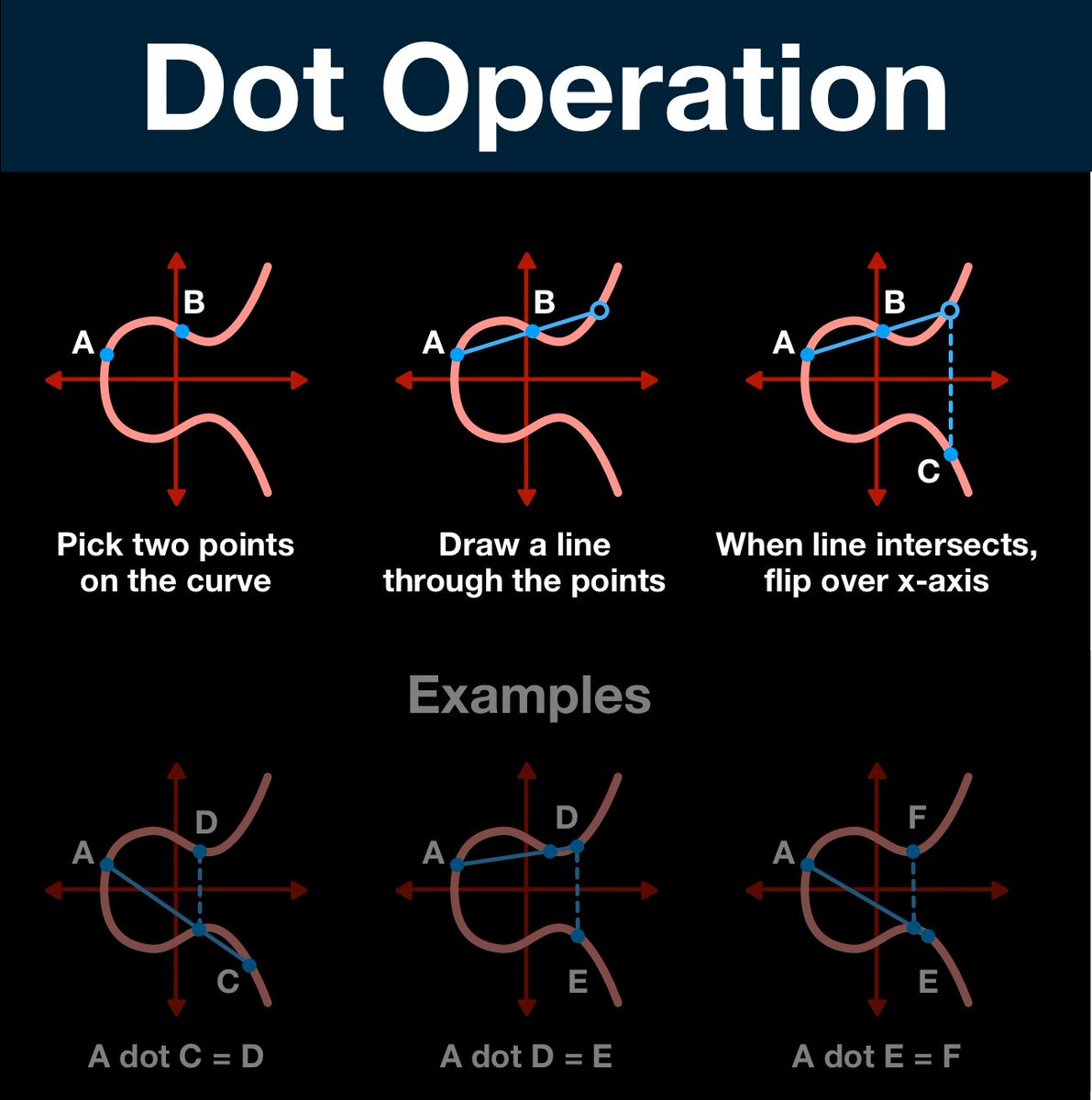

The security of many zero-knowledge protocols relies on a challenge-response mechanism. The verifier issues a random challenge to the prover, who must then provide an appropriate response based on their secret knowledge. This challenge value introduces randomness that prevents the prover from using pre-computed responses.

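For example, consider a deliberately simplified scenario built on the function f(x) = x²+1. Bob issues a fresh challenge value c=3 and expects a response tied to that challenge, namely f(c)=c²+1=10. A prover who merely replays a value computed beforehand, say r=f(2)=5, fails the check: since 5≠10, Bob rejects. This toy only shows why a fresh challenge defeats pre-computed answers. In a real protocol the expected response depends on both the challenge and the prover's secret, and Bob checks it against public information without ever learning the secret.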

This challenge-response approach is at the heart of many interactive zero-knowledge protocols, ensuring that provers must actually possess the claimed knowledge rather than simply replaying predetermined responses.
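
One classic protocol with this commit, challenge, response shape is based on square roots modulo a composite number; it is offered here as an assumed example, since the text above does not name a specific protocol. The prover knows a secret s whose square v = s² mod N is public. In the sketch below, the modulus `N`, the value `SECRET`, and all function names are hypothetical, and the numbers are far too small to be secure.

```python
import secrets
from math import gcd

N = 3233                       # toy modulus 61 * 53 (hypothetical, insecure)
SECRET = 123                   # prover's secret s, coprime to N
PUBLIC = pow(SECRET, 2, N)     # published value v = s^2 mod N


def random_unit():
    """Pick a random value in [2, N-1] that is coprime to N."""
    while True:
        r = secrets.randbelow(N - 2) + 2
        if gcd(r, N) == 1:
            return r


def respond(r, challenge_bit, secret):
    """Honest response: r if the bit is 0, r * s mod N if the bit is 1."""
    return (r * pow(secret, challenge_bit, N)) % N


def verify(commitment, challenge_bit, response):
    """Check response^2 == commitment * v^bit (mod N)."""
    return pow(response, 2, N) == (commitment * pow(PUBLIC, challenge_bit, N)) % N


def honest_run(rounds=20):
    """Prover who knows the secret passes every round."""
    for _ in range(rounds):
        r = random_unit()
        commitment = pow(r, 2, N)
        bit = secrets.randbelow(2)               # verifier's random challenge
        if not verify(commitment, bit, respond(r, bit, SECRET)):
            return False
    return True


def cheating_run(rounds=20):
    """Prover without the secret guesses each challenge bit in advance."""
    for _ in range(rounds):
        r = random_unit()
        guess = secrets.randbelow(2)
        # Commitment crafted so that the response r verifies only if bit == guess.
        commitment = (pow(r, 2, N) * pow(PUBLIC, -guess, N)) % N
        bit = secrets.randbelow(2)               # the real challenge arrives afterwards
        if not verify(commitment, bit, r):
            return False
    return True
```

Because the challenge bit arrives only after the commitment is fixed, the cheating prover survives each round with probability 1/2, so `cheating_run` is expected to fail quickly while `honest_run` always succeeds.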

Given your familiarity with hash functions and Merkle trees, it's important to understand how zero-knowledge proofs build upon and extend these cryptographic primitives.

Hash functions play a central role in many zero-knowledge proof systems. Their one-way nature makes them ideal for committing to values without revealing them. For example, a prover can demonstrate knowledge of a preimage r for a hash H(r) without revealing r itself.

In a simple scenario, if both parties agree on a hash function like SHA-256, the prover can construct a proof that they know an input r such that SHA-256(r) equals a specific output hash, without revealing what r is. This allows for verification of knowledge without disclosure of the sensitive information itself.

The security of such proofs relies on the collision resistance and preimage resistance properties of cryptographic hash functions—properties you're already familiar with—making them natural building blocks for zero-knowledge systems.
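
The committing role of a hash function can be sketched with Python's standard `hashlib` and `secrets` modules. The function names `commit` and `open_commitment` and the choice of a 32-byte random nonce are assumptions made for this example. Note that this is a plain commit-and-reveal scheme: opening the commitment discloses the value, whereas proving knowledge of a preimage without opening it requires a general-purpose proof system on top.

```python
import hashlib
import secrets


def commit(value: bytes):
    """Return (commitment, nonce); the random nonce hides low-entropy values."""
    nonce = secrets.token_bytes(32)
    digest = hashlib.sha256(nonce + value).hexdigest()
    return digest, nonce


def open_commitment(commitment: str, nonce: bytes, value: bytes) -> bool:
    """Check that (nonce, value) is a valid opening of the commitment."""
    return hashlib.sha256(nonce + value).hexdigest() == commitment
```

The prover publishes only the digest up front. Preimage resistance keeps the value hidden until the prover chooses to reveal it, and collision resistance prevents the prover from later opening the same digest to a different value.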

Your understanding of Merkle trees provides an excellent foundation for grasping more complex zero-knowledge applications. Merkle trees are fundamental data structures in many ZKP systems, enabling efficient proofs of membership and other properties.

In identity systems, for example, Merkle trees can store user claims while allowing selective disclosure through zero-knowledge proofs. A user can prove they possess a valid claim that exists within a Merkle tree (whose root hash might be publicly available) without revealing which specific claim they're proving or any other claims in the tree.

By combining Merkle proofs with zero-knowledge techniques, systems can verify that certain data exists within a cryptographically secured structure without exposing the data itself. This creates powerful privacy-preserving verification mechanisms that build directly upon the Merkle tree concept.
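
As a reminder of the building block involved, here is a minimal Merkle membership proof in Python. It is a sketch under stated simplifications: the helper names are invented, odd levels are padded by duplicating the last node, and leaves and internal nodes are hashed the same way (real systems typically add domain separation). A plain Merkle proof like this one reveals the leaf and its path; a zero-knowledge variant would instead prove, inside a proof system, that such a path exists.

```python
import hashlib


def h(data: bytes) -> bytes:
    """SHA-256 digest used for both leaves and internal nodes."""
    return hashlib.sha256(data).digest()


def build_levels(leaves):
    """Return all tree levels, from hashed leaves (index 0) up to the root."""
    levels = [[h(leaf) for leaf in leaves]]
    while len(levels[-1]) > 1:
        level = levels[-1]
        if len(level) % 2 == 1:
            level.append(level[-1])    # pad in place so every node has a sibling
        levels.append([h(level[i] + level[i + 1]) for i in range(0, len(level), 2)])
    return levels


def make_proof(levels, index):
    """Collect (sibling_hash, sibling_is_left) pairs from the leaf up to the root."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1            # neighbour within the same pair
        proof.append((level[sibling], sibling < index))
        index //= 2
    return proof


def verify_proof(root, leaf, proof):
    """Recompute the root from a leaf and its sibling path."""
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root
```

A verifier who holds only the root (`build_levels(leaves)[-1][0]`) can check membership with a number of hashes logarithmic in the number of leaves, which is what makes the structure attractive inside zero-knowledge circuits.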

Combining zk-SNARKs (Zero-Knowledge Succinct Non-Interactive Arguments of Knowledge) with Merkle proofs creates particularly powerful verification systems. These combined techniques allow for non-disclosing membership proofs with strong privacy guarantees.

For instance, a user could prove they are on an allowlist (represented as a Merkle tree) without revealing their identity or position within that list. The zk-SNARK component ensures this proof remains zero-knowledge, while the Merkle proof aspect provides efficient verification.

This combination leverages your existing knowledge of Merkle trees while extending their capabilities through zero-knowledge techniques, enabling applications that would be impossible with Merkle trees alone.

Zero-knowledge proofs come in various forms, each with distinct characteristics and applications. Understanding these varieties helps in selecting the appropriate approach for specific use cases.

Early zero-knowledge proofs were interactive, requiring multiple rounds of communication between prover and verifier. In these systems, the verifier issues challenges to which the prover must respond correctly. This interaction helps establish the verifier's confidence in the proof through repeated testing.

However, for many applications, interactivity is impractical. Non-interactive zero-knowledge proofs (NIZKs) solve this by allowing the prover to generate a single proof that anyone can verify without further interaction. NIZKs typically use a common reference string or some other setup mechanism to enable this non-interactivity, making them more suitable for blockchain and other distributed applications where direct interaction may be impractical.
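
Besides a common reference string, another widely used route to non-interactivity is the Fiat-Shamir heuristic, which the text above does not name and which is shown here as an assumed example: the verifier's random challenge is replaced by a hash of the public values, so the prover can compute it alone. The sketch applies this to the Schnorr protocol, where the prover knows a secret exponent x for a public key y = G^x mod P. The group parameters and function names are illustrative, and the group is far too small for real use.

```python
import hashlib
import secrets

# Toy group (assumed for illustration; far too small for real use):
# P = 2*Q + 1 with P and Q prime; G = 4 generates the subgroup of order Q.
P, Q, G = 2039, 1019, 4


def challenge_from_hash(public_key, commitment):
    """Derive the challenge by hashing the public values instead of asking a verifier."""
    data = f"{G}|{P}|{public_key}|{commitment}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(secret):
    """Produce a one-message proof of knowledge of the exponent behind the public key."""
    public_key = pow(G, secret, P)
    nonce = secrets.randbelow(Q)
    commitment = pow(G, nonce, P)
    challenge = challenge_from_hash(public_key, commitment)
    response = (nonce + challenge * secret) % Q
    return public_key, (commitment, response)


def verify(public_key, proof):
    """Anyone can recompute the challenge and check the proof offline."""
    commitment, response = proof
    challenge = challenge_from_hash(public_key, commitment)
    return pow(G, response, P) == (commitment * pow(public_key, challenge, P)) % P
```

The proof is a single pair of numbers that can be posted once and checked by any number of verifiers, which is the property that makes non-interactive proofs a natural fit for blockchains.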

zk-SNARKs have gained significant attention, particularly in blockchain applications, for their combination of zero-knowledge with succinctness. The "succinct" property means that proofs are small in size and quick to verify, making them practical for resource-constrained environments.

A key characteristic of many zk-SNARK constructions is their reliance on a trusted setup phase. This initial ceremony generates public parameters from secret randomness (often called "toxic waste") that must be destroyed afterward to ensure the system's security. If that secret randomness is retained or leaked, someone could create convincing proofs of false statements without possessing the knowledge being proven.

Despite this setup requirement, zk-SNARKs' efficiency has made them popular in privacy-focused cryptocurrencies and other applications where compact proofs are valuable.

Zero-Knowledge Scalable Transparent Arguments of Knowledge (zk-STARKs) represent another important variant that addresses some limitations of zk-SNARKs. STARKs eliminate the need for a trusted setup, making them "transparent." Because their security rests on hash functions rather than on elliptic-curve assumptions, they are also believed to resist known quantum attacks, unlike the widely deployed pairing-based SNARKs.

The trade-off is that STARK proofs are typically larger than SNARK proofs, making them less suitable for highly constrained environments. However, their post-quantum security properties and transparency make them attractive for many applications.

Other variants include Bulletproofs (which also avoid trusted setups while achieving relatively compact proofs) and various specialized constructions optimized for specific applications, each offering different trade-offs in terms of proof size, verification time, setup requirements, and security assumptions.

The unique properties of zero-knowledge proofs enable numerous applications that require verification without compromising privacy.

Identity systems represent a natural application for zero-knowledge proofs. Traditional identity verification often requires revealing more information than necessary—showing your entire driver's license to prove you're of legal drinking age, for example.

Zero-knowledge proofs allow for selective disclosure, where users can prove specific attributes about their identity without revealing unnecessary details. For instance, using ZKPs, a person could prove they are over 21 without revealing their exact birthdate or any other information on their ID.

These systems typically leverage Merkle trees to store claims about users, with ZKPs enabling users to prove possession of specific claims without revealing which claim they're proving. This architecture supports privacy-preserving identity verification at scale.

Financial privacy represents another critical application area. Zero-knowledge proofs enable transactions where the sender, receiver, and amount remain confidential while still ensuring the transaction's validity.

For example, a user could prove they have sufficient funds for a transaction without revealing their account balance. Similarly, in confidential computing scenarios, organizations can prove computations were performed correctly on sensitive data without exposing the data itself, enabling secure multi-party computation while preserving data privacy.

Zero-knowledge proofs transform authentication by eliminating the need to transmit or store sensitive credentials. Rather than sending a password to a server for verification, a user can prove knowledge of the password without ever transmitting it.

This approach eliminates the risk of password theft during transmission and reduces the impact of server-side data breaches, as servers never need to store the actual authentication secrets. The challenge-response mechanisms inherent in many ZKP systems naturally support this authentication model.

Zero-knowledge proofs offer a compelling solution to the tension between regulatory compliance and privacy. Organizations can prove compliance with regulatory requirements without exposing sensitive underlying data.

For instance, a financial institution could prove that all its transactions comply with anti-money laundering rules without revealing the specific transactions or customer details. This capability enables regulatory oversight while maintaining confidentiality for both the institution and its customers.

While zero-knowledge proofs offer powerful capabilities, implementing them effectively requires addressing several practical considerations and challenges.

A significant challenge in deploying zero-knowledge proofs is their computational intensity. Generating proofs often requires substantial computational resources, making them potentially impractical for resource-constrained environments or real-time applications.

Recent advances have significantly improved performance, but ZKPs remain more computationally demanding than simpler cryptographic techniques. Implementation decisions must carefully balance security needs against performance requirements, particularly in consumer-facing applications where user experience concerns are paramount.

Zero-knowledge proof systems vary in their security assumptions and trust requirements. Some require trusted setups, where compromise could undermine the entire system, while others have different security trade-offs.

Implementing ZKPs securely requires careful consideration of the specific security properties needed for a given application and selection of the appropriate ZKP variant. Additionally, the surrounding system architecture must be designed to avoid undermining the ZKP's security guarantees through side-channel attacks or implementation flaws.

The relative novelty of practical zero-knowledge proof systems means standardization remains incomplete. Different implementations may use incompatible approaches, limiting interoperability between systems.

As the technology matures, standardization efforts are emerging, but implementers currently face choices between established but potentially limiting standards and newer, more capable approaches that may lack broad adoption. This tension requires careful navigation based on specific project requirements and risk tolerance.

Zero-knowledge proofs represent a profound advancement in cryptography, enabling verification without disclosure in ways that were once thought impossible. Building on your existing knowledge of hash functions and Merkle trees, ZKPs extend these foundational cryptographic primitives to create powerful new capabilities for privacy-preserving systems.

The field continues to evolve rapidly, with new constructions offering improved efficiency, security properties, and application possibilities. As computational techniques advance and implementation experience grows, we can expect zero-knowledge proofs to become increasingly practical for mainstream applications, potentially transforming how we approach authentication, privacy, and verification across digital systems.

Understanding the principles, varieties, and applications of zero-knowledge proofs provides a foundation for leveraging these powerful techniques in building the next generation of privacy-preserving systems. The potential of ZKPs to reconcile the seemingly contradictory goals of verification and privacy makes them one of the most promising technologies for addressing the growing privacy challenges of our digital world.
